It’s 2020 and if you throw a stone, you’re likely to hit a vendor that offers some sort of visibility to its customers through listening to network traffic. Whether they’re focused on IoT, operational technology (OT), medical devices or basic anomaly detection, you can find many vendors that have taken network taps or SPAN ports, sprinkled on some vanilla machine learning and are calling the result “visibility.”

Visibility is, of course, a critical element of security – we can’t properly defend what we can’t see. At its core, visibility is about providing data and then organizing, analyzing and visualizing that data in a way that helps defenders or automation make decisions. The evolution we’ve now reached is not only about collecting this data, but also being able to put it to use through control. In that sense, visibility data is the “new oil” of security programs, as it can serve as the foundation for any technology to build policy or rules against.

Nearly every CIO, CISO and industry peer we talk to wants to be able to put their visibility data to use for control. The challenge is, in many cases it can’t be done. Control is hard and it requires conviction in the accuracy of visibility data.

Achieving control means being able to take the visibility outcomes of devices, align them to policy, and then translate those policies across the various infrastructures that these devices connect to in an automated and continuous way. Control in modern times also isn’t an on-off switch. Organizations need to maintain a discrete understanding of the grey areas, as well, such as implementing a minimum level of access or access only to specific areas of the network. This part of the job is where visibility gets teeth, letting organizations take on a true active defense posture and convert the value of data into the actual reduction of risk.

Control falls short when organizations lack confidence, and confidence comes from a thorough understanding of what’s on your network and how it interacts across the enterprise. True confidence stems from combining passive and active visibility, which together allow for controls and an active defense strategy that is both effective and comprehensive.

Active Versus Passive Visibility

Many of the start-ups in the device visibility and control market rely on analyzing network traffic captured from SPAN ports and sensors placed around the network. This isn’t a problem for a small organization (fewer than 500 employees) because it usually isn’t too hard to find the right places to put sensors to capture enough traffic to make confident decisions. For large organizations, however, this often proves challenging or impossible altogether. For example, a small or medium business could deploy 25 sensors and get reasonable visibility, but a Global 2000 company could need 10,000 or more to achieve the same effect. As the organization grows, this quickly becomes cost-prohibitive or logistically challenging.

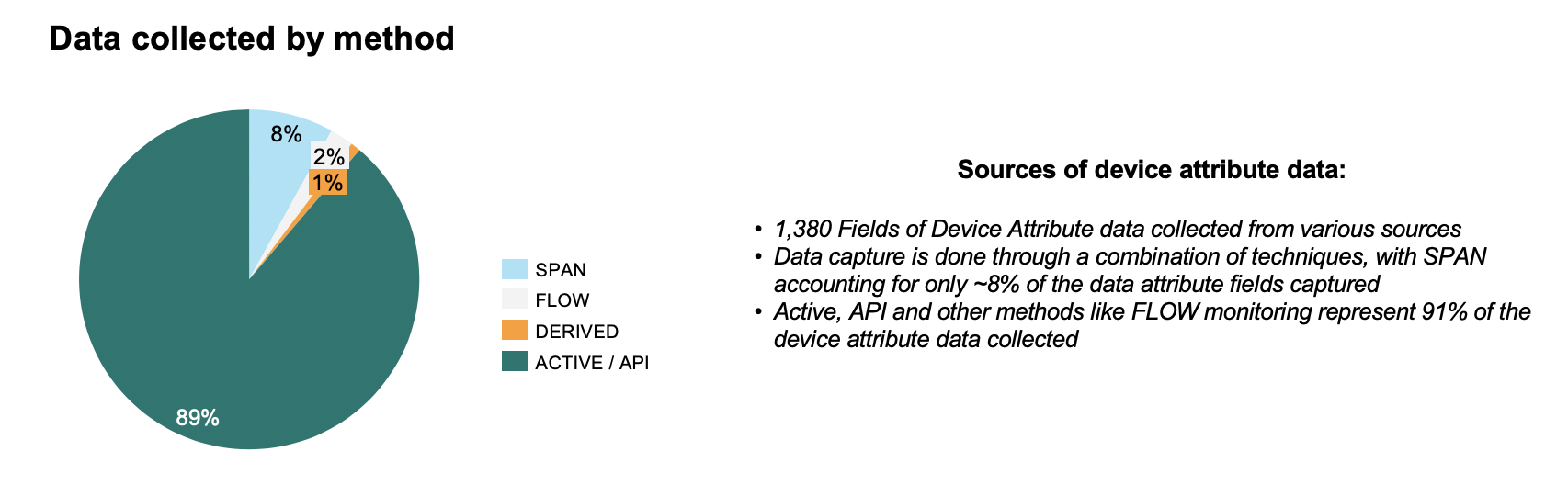

Relying on network traffic as the primary or only method of deriving intelligence also neglects the fact that most modern devices communicate over encrypted channels or through protocols that give little to no indication of what the device is. At Forescout, for instance, of the 1,380 unique attributes we collect about devices, only 111 (about 8%) are learned from listening to network traffic. The other 1,269 attributes are learned through methods other than SPAN port integration, which range from active probes, RADIUS, WMI, switch integration, API calls, integrations with operating systems, flow analyzers and more. This gap will likely only widen as encryption technology improves (e.g., TLS 1.3), similar to how we see MITM proxies or CASB technologies struggle.

Figure 1: Data collected by Method from the Forescout Platform

Finally, focusing intelligence gathering exclusively on network traffic makes it easier for malicious actors to impersonate a device by replaying its traffic. This could trick the visibility tool into making inaccurate assumptions about that device, letting physical MITM attacks or network sniffing go almost unnoticed. For example, the profile for an IoT device like an IP camera would normally comprise specific ports and amounts of data exchanged over known protocols like RTSP. It would be easy for a visibility tool to classify anything exhibiting those patterns as a camera. But it would be just as easy for an attacker to mimic those same traffic patterns to fool the system into allowing it on the network if the tool relies on SPAN ports as its only source of data.

“Of the 1,380 unique attributes we collected about devices, only 111 of them are learned from listening to network traffic.”

Passive Visibility: What You’re Missing

When you don’t have the full picture, you often don’t know what you’re missing. That gap in understanding can be catastrophic for your organization without you even realizing it. Getting a truly complete picture of a device, including how it connects and behaves, requires combining many different inputs of information, with more sources resulting in increased accuracy of attributes used for classification, categorization and security hygiene.

In our same example of the IoT camera, utilizing other sources like PoE utilization from a switchport, active banner assessment from open ports and techniques such as NAT detection (patented by Forescout) can significantly increase a security leader’s confidence that the device is truly an IoT camera, and not an attacker impersonating one.
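The camera example can be sketched as a simple evidence score. This is only an illustration: the evidence names, weights and threshold below are hypothetical and do not represent Forescout's actual classification logic. The point is that a replayed traffic pattern alone should not be enough to earn the "camera" label.

```python
from typing import Mapping

# Hypothetical evidence sources and weights (integers, summing to 100).
# These names and values are assumptions for illustration only.
CAMERA_EVIDENCE_WEIGHTS = {
    "rtsp_traffic_seen": 25,          # passive: pattern observed on a SPAN port
    "poe_draw_in_camera_range": 25,   # active: PoE utilization read from the switchport
    "banner_matches_camera": 35,      # active: banner assessment of open ports
    "no_nat_detected": 15,            # active: device is not hiding others behind NAT
}

def camera_score(evidence: Mapping[str, bool]) -> int:
    """Add up the weights of every piece of evidence that was observed."""
    return sum(
        weight
        for name, weight in CAMERA_EVIDENCE_WEIGHTS.items()
        if evidence.get(name, False)
    )

def classify(evidence: Mapping[str, bool], threshold: int = 70) -> str:
    """Label the device a camera only when enough sources corroborate it."""
    return "ip-camera" if camera_score(evidence) >= threshold else "unverified"

# An attacker replaying RTSP traffic satisfies only the passive check.
passive_only = {"rtsp_traffic_seen": True}

# A genuine camera also passes the active checks.
corroborated = {name: True for name in CAMERA_EVIDENCE_WEIGHTS}
```

With these assumed weights, `classify(passive_only)` stays `"unverified"` while `classify(corroborated)` returns `"ip-camera"`, which mirrors the argument that more sources raise confidence in the classification.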

Attributes of a device can include IP Address, MAC Address, DHCP Options Fingerprint, Missing Windows Patches, TCP SYN/ACK Fingerprints, CDP Flags, PoE Power Draw, and more. At Forescout, we collect 1,380 unique attributes about devices connected to the network. This feeds our ability to classify, categorize, and understand the health and hygiene of these devices.

A larger sample set also leads to more accurate device fingerprints. Many “visibility” vendors use off-the-shelf machine learning tools, like TensorFlow, to train basic machine learning models in the customer network. The challenge with training in the customer network is that the intelligence is only as good as the baseline it learns from, and that baseline is only clean if the network was clean when it was captured. If the network already has devices behaving badly, those devices will be baselined as normal.
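The baseline problem can be shown with a toy example. The sketch below uses a plain mean-plus-three-standard-deviations threshold rather than a TensorFlow model, and the traffic figures are made up for illustration; it is not how any particular vendor's detection works. It only demonstrates how a misbehaving device that is present during training inflates what counts as "normal."

```python
from statistics import mean, pstdev
from typing import Sequence

def is_anomalous(observation: float, baseline: Sequence[float], k: float = 3.0) -> bool:
    """Flag an observation that exceeds mean + k standard deviations of the baseline."""
    threshold = mean(baseline) + k * pstdev(baseline)
    return observation > threshold

# Hypothetical megabytes-per-hour samples for one device type.
clean_baseline = [100, 110, 95, 105, 102, 98]

# Same network, but a compromised device was already exfiltrating data
# while the model was being trained, so its samples are part of "normal".
contaminated_baseline = clean_baseline + [900, 950, 920]

suspicious_sample = 900

flagged_with_clean = is_anomalous(suspicious_sample, clean_baseline)
flagged_with_contaminated = is_anomalous(suspicious_sample, contaminated_baseline)
```

Against the clean baseline the 900 MB/hour sample is flagged; against the contaminated one it is not, because the bad device has already been learned as normal.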

Meanwhile, leveraging a crowdsourced data lake of device intelligence across many sources and real-world environments allows ML to generate a better baseline by comparing devices across many real-world use cases and organizations. Building a crowdsourced data lake is easy so long as you have a large set of data sources, as well as the ability to collect and centralize that data. As is true in many cases, the recipe for success in ML lies in the amount of data the models have to train against. The challenge for many pop-up visibility vendors is that they lack the large enterprise customers or the sheer number of deployments needed to feed their data lakes.

Confident Control Requires Active Visibility

Technologies like next-generation firewalls, software-defined networks and cloud computing offer lots of options to segment devices, minimize lateral movement or ultimately reduce risk. The challenge is that these methods require security teams to constantly maintain access rules, which are built from constantly changing IP addresses attached to many varying device types. In a world with billions of connected devices, a massive percentage of which are unmanaged, it is simply not possible for security teams to keep these rules consistently current and accurate by hand.
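One way to picture the maintenance problem is to compare rules written against IP addresses with rules derived from what each device is. The sketch below is a simplified assumption of how such a translation could work; the device types, destinations and the fallback "minimum access" entry are hypothetical names, not a real product's policy model.

```python
from typing import Dict, List, Tuple

Rule = Tuple[str, str, str]  # (source IP, allowed destination, action)

# Hypothetical policy: what each device type is allowed to reach.
POLICY: Dict[str, List[str]] = {
    "ip-camera": ["video-recorder"],
    "printer": ["print-server"],
}

# Unclassified devices get a minimum level of access instead of all or nothing.
DEFAULT_ALLOWED: List[str] = ["remediation-portal"]

def build_rules(inventory: Dict[str, str]) -> List[Rule]:
    """Derive allow rules from the current IP-to-device-type inventory."""
    rules: List[Rule] = []
    for ip, device_type in sorted(inventory.items()):
        for destination in POLICY.get(device_type, DEFAULT_ALLOWED):
            rules.append((ip, destination, "allow"))
    return rules

# Inventory as reported by the visibility layer on Monday.
monday = {"10.0.0.5": "ip-camera", "10.0.0.6": "printer"}

# After DHCP leases change, the same addresses belong to different devices,
# and a new, unclassified device has appeared.
tuesday = {"10.0.0.5": "printer", "10.0.0.6": "ip-camera", "10.0.0.7": "unknown"}

monday_rules = build_rules(monday)
tuesday_rules = build_rules(tuesday)
```

A rule set written by hand against Monday's addresses would give the printer camera access on Tuesday. Regenerating the rules from device attributes keeps them correct, but only if the classification feeding `build_rules` can be trusted.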

While automating this upkeep may seem like the logical next step, automation is only as trustworthy as the data behind it: you can set the right control policies only when you have confidence in the underlying visibility data. You can find many products in the market that provide some sort of visibility, usually based on analyzing traffic and trying to infer what a device is or what it is doing by passively observing its behavior. While valuable, this method is woefully incomplete and often leads to false positives or false negatives. IT leaders don’t want another blinking check engine light they have to investigate. They want answers and actions.

Active Visibility, by comparison, combines extensive observation with the active and ongoing interrogation of devices to provide a more complete and holistic visibility picture with which to implement control. A simple analogy is that a box of bananas and a box of explosives can look, feel, and even weigh the same to a passive observer. However, active assessments like the X-rays and other scans done by the Post Office or carriers can show what’s really inside. Passive visibility gives us a picture of the world as it appears; active visibility gives us a picture of the world as it actually is.

On top of that, we live in a multi-vendor world. Even if you buy all your equipment from one vendor, it is almost guaranteed there will be a part of your environment where you need to manage policy through a different console or tool. For example, you can choose Cisco Wired, Wireless, Firewalls and Data Center SDN, but what about your public cloud? This adds another layer of complexity to the equation. Building and maintaining integrations with hundreds of vendors’ infrastructure products is required to make control a reality, but it is not trivial.

The Bottom Line

In each of these cases, what separates good visibility and control from bad is confidence. While there are certainly still use cases for visibility tools in asset inventory or license audits, passive visibility alone lacks the precision for a true active defense security posture. Organizations need to be able to have the full picture from 20 different sources of data, not just one. Only then will they have the real visibility they need to close the security circuit with control and limit the risk exposure of their organization.